Continuously improving the Help Center to make sure it has the content that users are looking for is important to us, and getting actionable data on what content is missing or could be improved is very valuable.

To this end, when users read a Help Center article but don't find it helpful or what they were looking for (or they spot a mistake, etc.), we'd like to collect feedback on why it wasn't helpful.

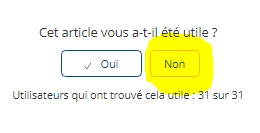

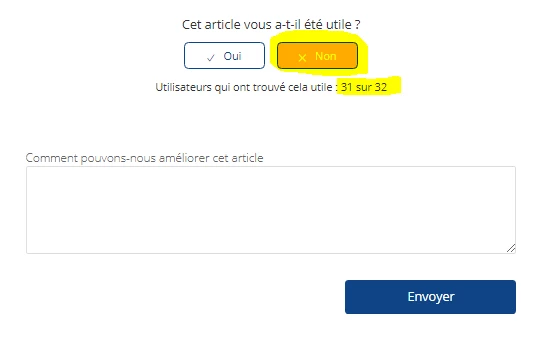

Current implementation of Was this article helpful

The current implementation has limitations:

- users cannot tell us what they were looking for or what was missing; they can only vote 'helpful/not helpful'

- reports on feedback are very basic: comparisons such as page visits vs. votes vs. time period are missing

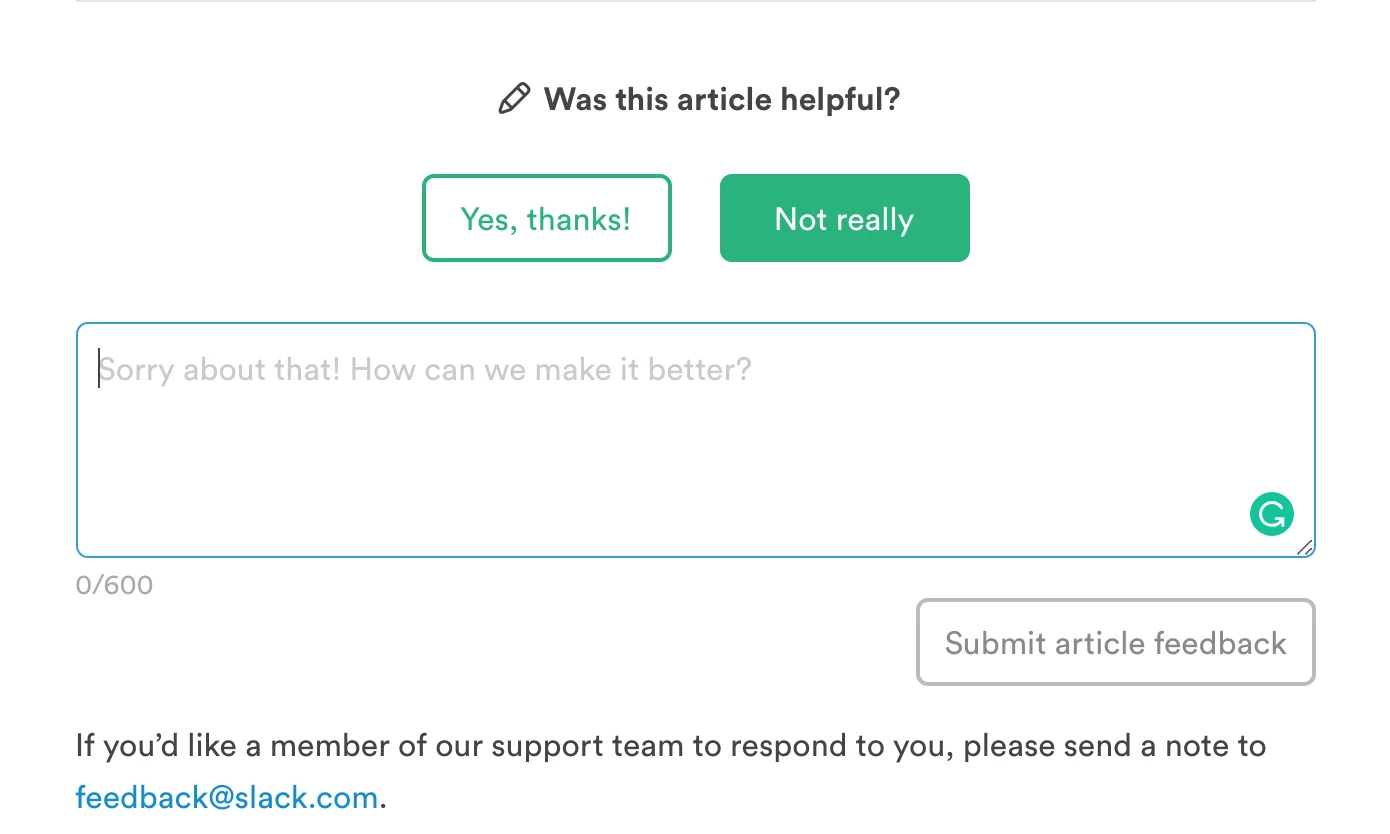

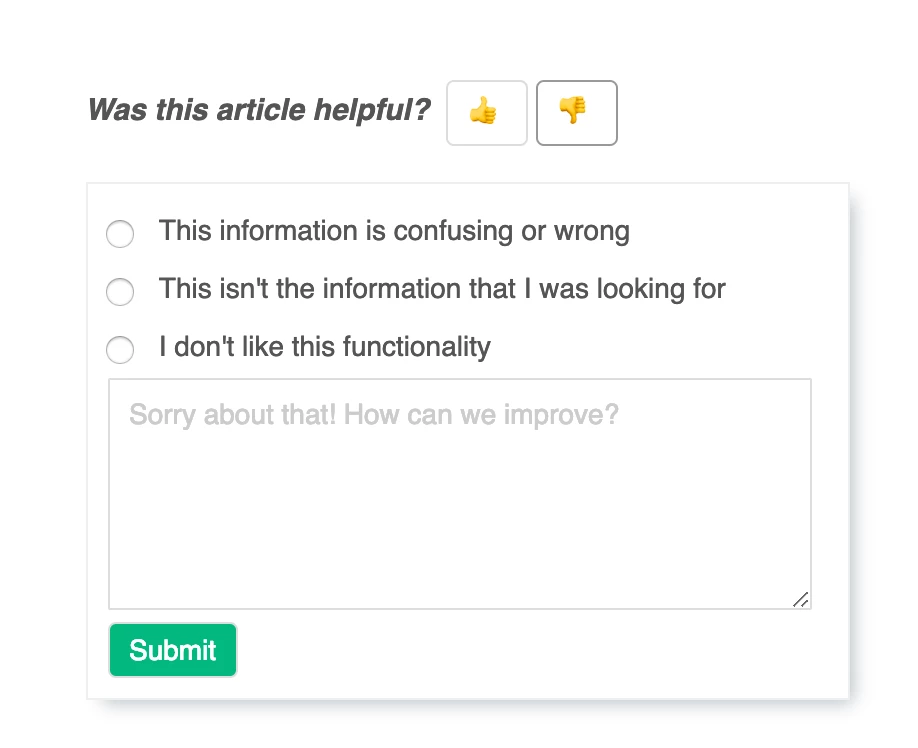

Example implementation with reasons

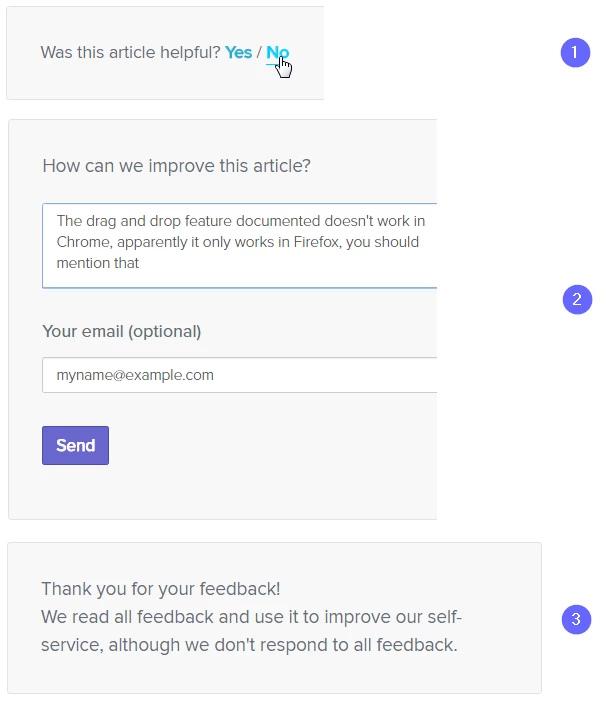

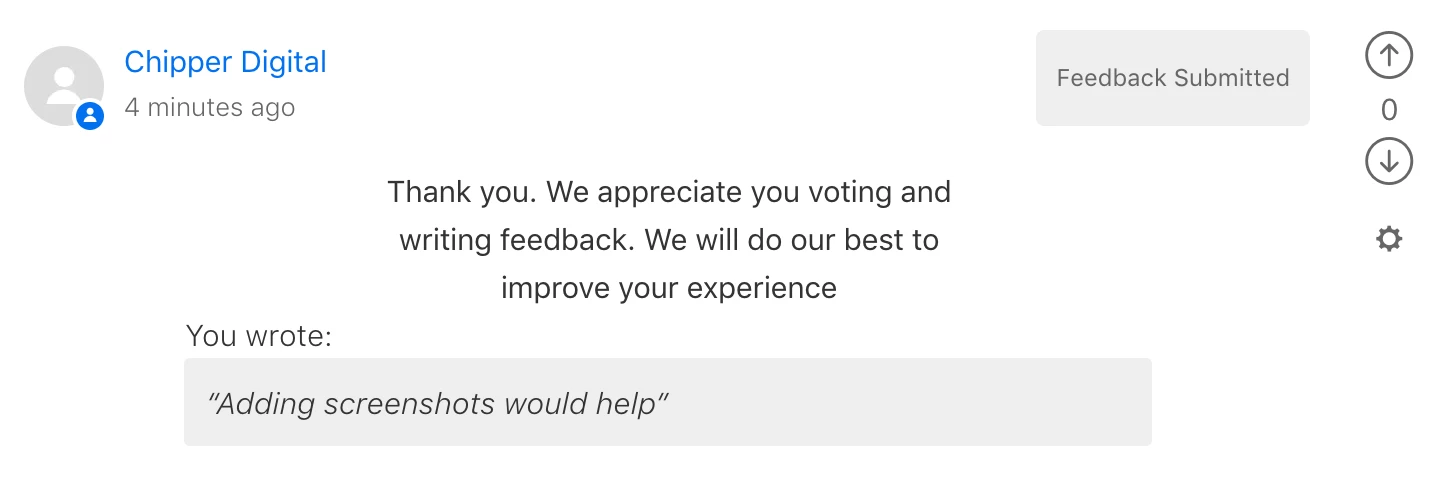

Here is an example taken from another site that shows what the end-user experience could be:

Data we'd like to collect

Stuff like

- article name

- article URL/link

- the feedback text

- the user email (optional when leaving feedback, but if provided, would allow us to follow up with that user)

- date and time

- and nice to have: Browser type and IP (in case it might be browser related)
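To make the list above concrete, here is a rough sketch of what one feedback record could look like. The `ArticleFeedback` type, the `buildFeedback` helper, and all field names are hypothetical and only for illustration; they are not part of any existing Help Center API.

```typescript
// Hypothetical shape of one feedback record; every name here is an assumption.
interface ArticleFeedback {
  articleName: string;
  articleUrl: string;
  feedbackText: string;
  userEmail?: string;   // optional: only set if the user chose to provide it
  submittedAt: string;  // date and time, as an ISO 8601 string
  browser?: string;     // nice to have
  ipAddress?: string;   // nice to have
}

// Optional details the user or the page may supply alongside the feedback.
interface FeedbackExtras {
  userEmail?: string;
  browser?: string;
  ipAddress?: string;
}

// Builds a record from the form input, rejecting empty feedback text.
function buildFeedback(
  articleName: string,
  articleUrl: string,
  feedbackText: string,
  extras: FeedbackExtras = {},
  now: Date = new Date(),
): ArticleFeedback {
  const text = feedbackText.trim();
  if (text.length === 0) {
    throw new Error("feedback text is required");
  }
  const record: ArticleFeedback = {
    articleName,
    articleUrl,
    feedbackText: text,
    submittedAt: now.toISOString(),
  };
  // Only copy optional fields that were actually provided.
  if (extras.userEmail) record.userEmail = extras.userEmail;
  if (extras.browser) record.browser = extras.browser;
  if (extras.ipAddress) record.ipAddress = extras.ipAddress;
  return record;
}
```

Keeping the email, browser, and IP fields optional matches the idea that anonymous users can still leave feedback, while a provided email would allow a follow-up.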

EDIT: When I wrote this feature request, we didn't have anonymous voting. This has since been delivered, so I've updated the request accordingly.

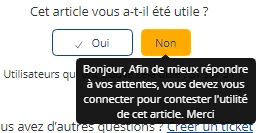

When we hover over the "No" button, a tooltip appears saying that you must be logged in to add a negative vote.


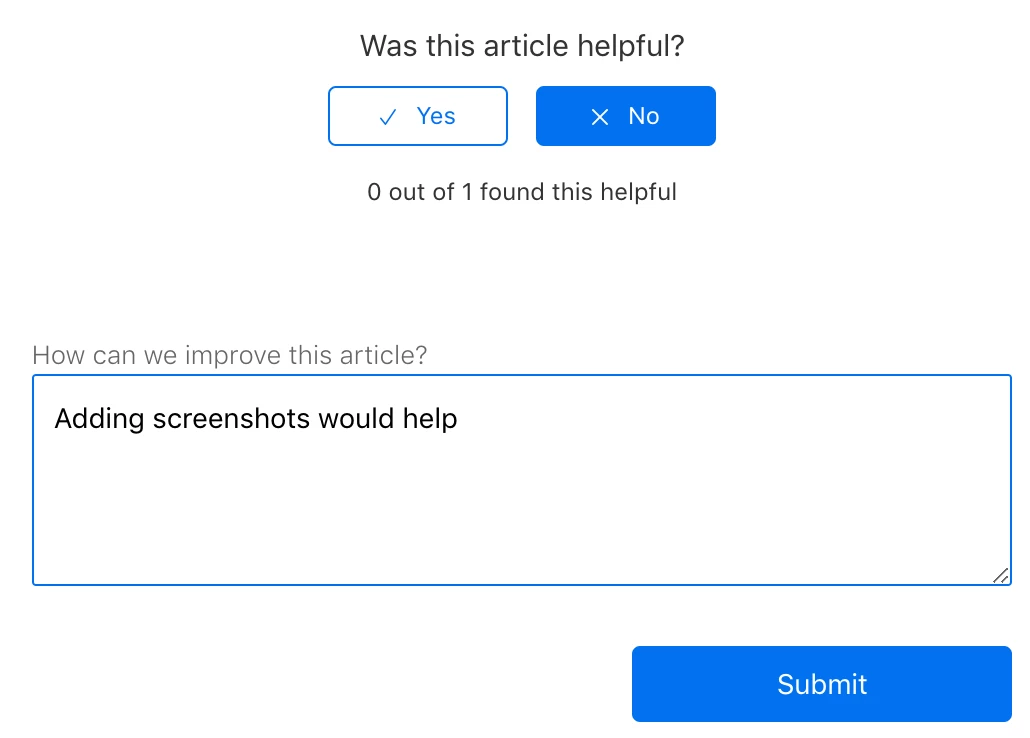

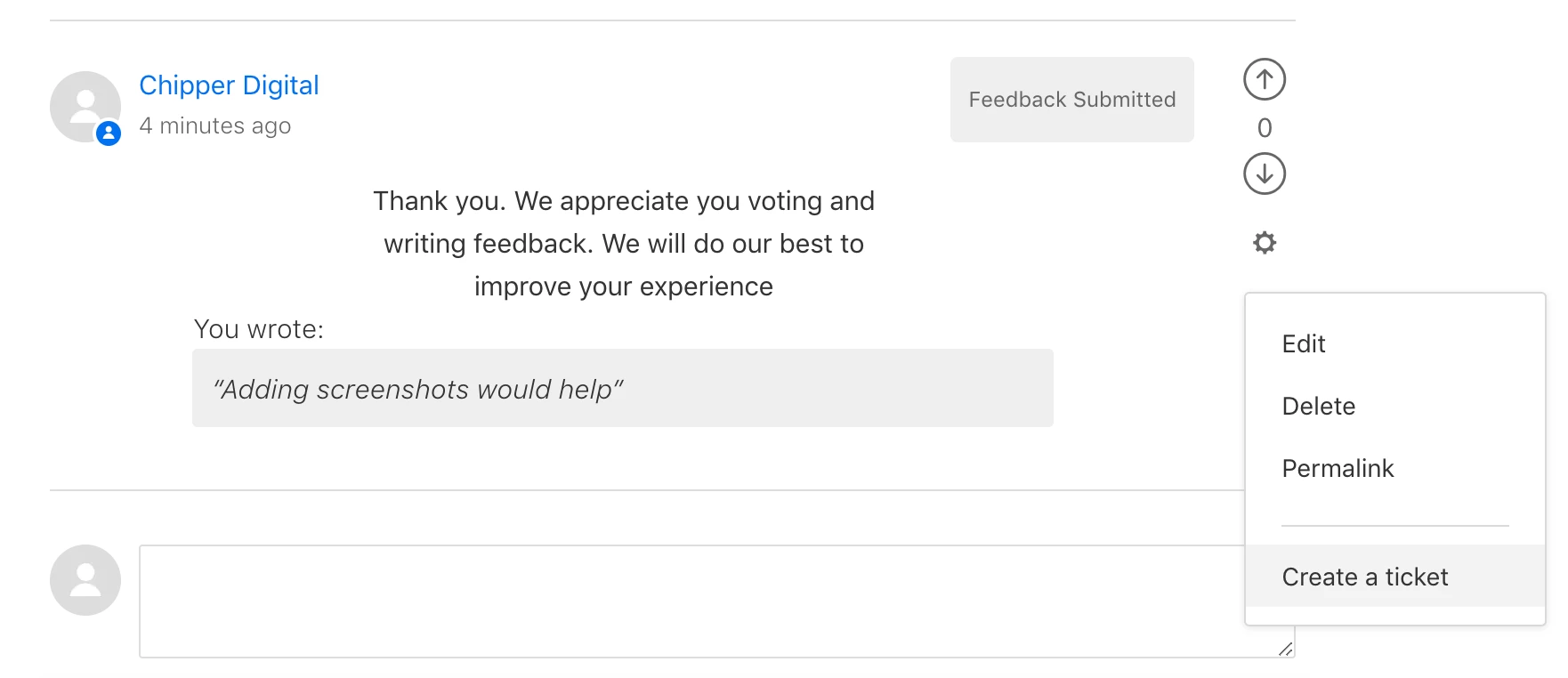

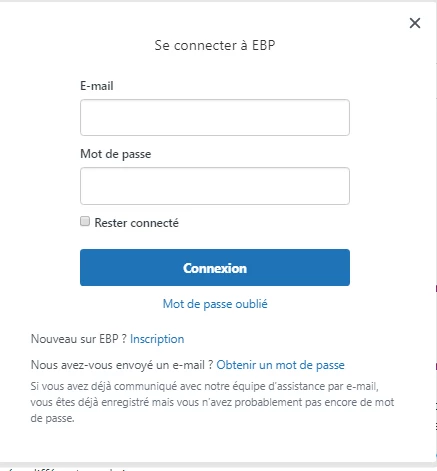

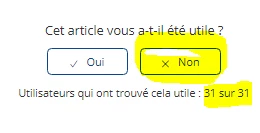

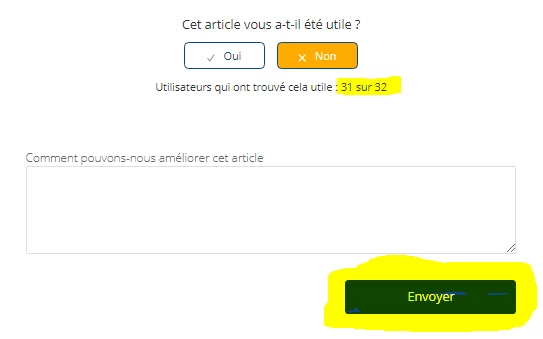

When a user clicks the "No/downvote" button, the vote count goes up and the comment form appears. The problem is that if the user leaves the page or refreshes it, the vote count still shows the updated amount (here 32), even if they haven't submitted the form.
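One possible reading of the expected behaviour is that the vote should only be counted once the comment form is submitted. The sketch below models that with a small in-memory class; `DownvoteFlow` and its methods are hypothetical names for illustration, not the Help Center's actual code.

```typescript
// Hypothetical model of the "No" vote flow, assuming the vote is only
// committed when the comment form is submitted.
class DownvoteFlow {
  private count: number;
  private pending = false;
  private comments: string[] = [];

  constructor(initialCount: number) {
    this.count = initialCount;
  }

  // Clicking "No" only opens the comment form; nothing is counted yet.
  clickNo(): void {
    this.pending = true;
  }

  // Submitting the form is what commits the vote and stores the comment.
  submitForm(comment: string): void {
    if (!this.pending) {
      throw new Error("no vote in progress");
    }
    this.comments.push(comment);
    this.count += 1;
    this.pending = false;
  }

  // Leaving or refreshing the page discards the uncommitted vote.
  leavePage(): void {
    this.pending = false;
  }

  get voteCount(): number {
    return this.count;
  }
}
```

With this model, a count of 31 stays at 31 if the user clicks "No" and then leaves the page, and only becomes 32 after the form is submitted.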

Hi Benjamin!

Let me know if I understand you correctly: when a user clicks the "No/downvote" button, the vote count goes up, and when the "Yes/upvote" button is clicked nothing happens at all? Is that right?